Companies like Cisco/Juniper expecting all network engineer must have working knowledge on writing “Infrastructure As Code (IaC)” for network devices aka NetworkOps (NetOps) by 2022 or earlier if possible! SD-WAN/SD-Access/ACI/Firewall/NGFW/Cloud Networking – doesn’t matter whatever your networking career track is – you need to know network automation and orchestration.

I have been asked question many times regarding where to start with Ansible as a network engineer last few months. More precisely the question was always – I am a network engineer, now I want to learn network automation and orchestration with Ansible – can you please tell from “where to start”?

I try to shed some light on this; Following are my step-by-step guide on where to start with Ansible for network engineers. This step-by-step guide comes with following:

- Ansible installation on Linux (Ubuntu).

- Playing with Ansible playbooks including three (03) tasks;

- returning “show run” from Cisco IOS devices

- configure network interface with IPAddr and OSPF 100″ on Cisco IOS devices

- capture “show run” output and save to a file as backup for Cisco IOS devices

- This also includes where to start with Linux system and an text editor on the Day 1 if you do not have any prior Linux knowledge.

Ansible IaC codes are based on YAML; YAML is the easiest one to start with if you have no prior coding skill.

Part 1: Learn Linux and at least one CLI text file editor (optional)

If you already have some Linux experience – you can start from Part 2.

Why Linux? Most of the automation and orchestration tools preferred OS platform is Linux – that’s why (I can tell you another 101 reasons on why you should learn Linux as a network engineer).

Download a copy of Ubuntu/Debian/CentOS ISO image. Install the OS on VMware or VirtualBox. To start with – follow the “default” next -> next -> finished installation instructions. If the installer ask you to create an user account – create it. Aim to learn customised advanced installation later on once you have some confidence on Linux; Google it for details.

Ok, your installation is done; you have logged into you newly installed Linux instance – what is next on the day 1?

Well, let’s start with Linux file system.

If you look at a Windows file system, you already know how it looks like! You understand the meaning of C:\ drive, D:\ drive, C:\Program Files, C:\Windows, C:\Windows\System32 etc. The same way you need to know Linux file systems; everything within Linux are files and directories – let’s have a look into “key” Linux file systems following table –

| Linux File System Name |

What is does? |

Similar Windows File System |

| “/” |

Linux file system is based on hierarchical fashion. “/” this is the root of all Linux file system. |

This is not visible within Windows. |

| “/etc” |

Host specific system configuration directory |

C:\Windows\System32 |

| “/lib” |

Shared Library files |

C:\Windows\System32 – DLL files |

| “/var/logs” |

Log files for system |

Event Viewer events |

| “/home/**” |

User home directories |

C:\Users\** |

| “/usr” |

User utilities and application |

C:\Program Files\** |

| “/boot” |

Boot partition or boot file system |

Boot drive on Windows; most of the case it is “C:\” |

| “/tmp” |

Temporary files |

C:\Temp |

| “/bin” |

Essential user binary files/command file |

C:\Windows\System32 – EXE files |

| “/opt” |

Add-on application software package directory |

D:\Program Files – if you have any |

| “/mnt” |

Temporary mount directory – CD/DVD |

CD drive/DVD drive |

How to browse Linux file systems on CLI? Very simple, following –

$cd /name_of_directory ;example - $cd /var/log; $cd /etc/network

$pwd ;this command will show where you are – within what directory

$ls ;this command will list the file within a directory

$ls -la ;this command will list the files in a directory with details

Few useful Linux CLI commands to start on the day 1 –

| Command Name |

What is does |

| who |

Show who are the logged in users at the moment |

| whoami |

Show username for the current working session |

| uname -a |

Show Linux kernel version |

| ifconfig |

Show NIC config with IP address |

| netstat -na |

Show all open and connected TCP/UDP sockets |

| netstat -nr |

Show routing table |

| cat /dir/filename |

Show the content of a file |

| tail /var/log/filename |

Show last few lines of a file |

It’s time for you to start Googling for more useful Linux commands; search for “20 useful Linux commands” and practice them immediately.

Let’s setup networking on the new Linux – I mean setting up a network interface with IPAddr/SubnetMask/DefaultGW/DNS etc.

You need to edit and add your network specific details onto the network interface configuration file. Well – probably you don’t know how to edit a file on Linux CLI? There so many text file editor available for Linux/Unix – my favourite one is “vi”; let’s have a look how to start with “vi” on the day 1:

| “vi” commands/options |

What is does? |

| $sudo vi newfilename |

This command will create a new file if the file is not already existed. Example:

$vi /tmp/myfirstfile.txt |

| $sudo vi /etc/hosts |

This command will open the file “/etc/hosts” – this file already exist. |

| Press “i” |

“i” means insert/edit mode; you are now allowed to start typing in the file. Make sure to open the file first using “vi” as mentioned above. |

| Press “esc” |

This will put back the file in read mode from any other modes such as insert/edit/append/search. You can only read and scroll in this mode |

| Press “a” |

“a” is append and allow edit; move cursor to a character > press a > then start writing new |

| Press “r” |

“r” is for replace a character; more cursor to a character > then press r > then press new character |

| Type in “:10” |

This will take you to “line” number 10; go to line 10 |

| Type in “/typesomething” |

“/hostname” this will search for “hostname” in the file |

| Type in “:w” |

write/save; make sure to press “esc” first then “:w” |

| Type in “:wq” |

write/save and quit/close the file |

| Type in “:wq!” |

write/save and quit/close the file in forced mode |

| Type in “:q!” |

force quit/close |

Network configuration files are stored in the “/etc/netplan/” directory on the latest Ubuntu Linux; the file name is something like “50-cloud-init.yaml” – make sure it is the “.yaml” extension file.

To configure the new Linux NIC – you need to do the following –

Step1: get the NIC name and number (ensXX)

$sudo ifconfig

This will display the network interface card details – let’s say the name and number is “ens33”:

Step2: configure the NIC with IP address details by editing the YAML config file

Let’s say we configure “ens33” with “DHCP auto IP: config as following –

$sudo vi /etc/netplan/50-cloud-init.yaml

Enter the following details:

network:

version: 2

renderer: networkd

ethernets:

ens33:

dhcp4: true

If we want to add a “static IP address” – the do the following –

$sudo vi /etc/netplan/50-cloud-init.yaml

Enter the following details –

network:

version: 2

renderer: networkd

ethernets:

ens33:

addresses:

- 192.168.101.201/24

gateway4: 192.168.101.254

nameservers:

search: [test.local, otherdomain]

addresses: [8.8.8.8, 8.8.4.4]

Now you need to apply the new configurations; enter the following commands –

$sudo netplan try ;this command should return “Configuration accepted.”

$sudo netplan apply ;this command will apply the new setting based on the file

$sudo ifconfig ;this command will show you the IP address details

Do a ping to a remote machine.

Part 2: Get Ansible installed on your new Linux system

As a part of learning Linux sysadmin tasks – you will now learn package management! This is basically how to install/uninstall/update new software packages on a Linux system. Different Linux distributions (Redhat/Debian/Ubuntu/CentOS/…) use different tools for package management. I will show you how to install Ansible on Ubuntu/Debian based system.

There are two parts of Ansible –

- Ansible Control Machine; this is from where you store you Ansible configuration files and control target systems

- Ansible target machine or target nodes; Ansible support wide range of targets including Linux, Windows, Cisco, Juniper, Palo, F5, AWS, GCP, Azure, etc….

You need to install Ansible “only” on the controller machine; NO need to install Ansible in the target nodes. Ansible is agentless – so no Ansible client software provided for a target node.

Ansible installation details are available here on the official document site – https://docs.ansible.com/ansible/latest/installation_guide/intro_installation.html

Package management on Ubuntu is done via “apt” utility; we will install Ansible using “apt” on our new Linux machine; following are the commands.

$sudo apt-get update ;this will update the package list on the Linux

$sudo apt-get install software-properties-common ; this will install software name “software-properties-common”

$sudo apt-add-repository --yes --update ppa:ansible/ansible ;this will add new software repository

$sudo apt-get install ansible ;this installs the ansible packages along with its dependencies

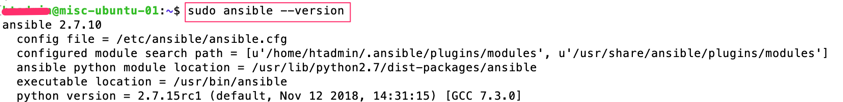

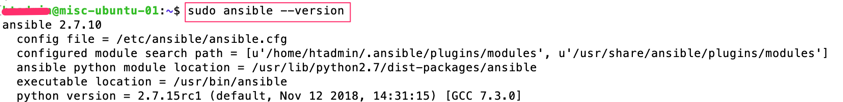

Enter command “$sudo ansible –version” to verify your installation.

Part 3: Let’s play with Ansible – Final Part

In this part I am covering how to use manage remote networking devices using Ansible.

There are two key files when playing with Ansible:

- Inventory file ; this file contains list of remote devices

- YAML playbook file/files; these files contain list of “tasks” to be applied onto the remote devices listed on the inventory file

YAML playbook “tasks” are defined within Ansible “modules”; let’s see the example following:

- you want to send “show” commands to Cisco IOS devices, so you need “ios_command” module installed in your Ansible

- you want send configuration commands to Cisco IOS devices to configured interfaces/routing, so you need “ios_config” module installed in your Ansible

If you don’t have “ios_config” module installed – then you will not be able to configure Cisco IOS devices using Ansible! Good news is default Ansible installation comes with hundreds of pre-installed modules including Cisco IOS, Cisco ASA, Juniper JUNOS, Palo Alto PanOS, AWS, GCP, Linux, Windows etc. Ansible is giving you option to create your own module in-case if you couldn’t find a module for a new type of device/software/system.

Following command list available installed modules in Ansible:

$ansible-doc -l | grep ios ;this will list all Cisco IOS modules

$ansible-doc -l | grep nxos ;this will list all Cisco NXOS modules

$ansible-doc -l | grep panos ;this will list all Palo PANOS modules

$ansible-doc -l | grep junos ;this will list all JUNOS modules

Ansible website has comprehensive details on every module; have a look at the “ios_config” module official page at -https://docs.ansible.com/ansible/latest/modules/ios_config_module.html?highlight=ios_config

Apart from the above inventory and YAML playbook file types – the main Ansible system parameters configuration file is “/etc/ansible/ansible.cfg”.

Step1: Ansible inventory file

Let’s have a look – what we can enter onto the “inventory” file; this file looks like following –

#Note – My list of Network Devices – Cisco/Juniper/Palo

csr-1000v-01 ansible_host=192.168.101.125

csr-1000v-02 ansible_host=192.168.101.126

sw-tst-01 ansible_host=192.168.101.131

sw-tst-02 ansible_host=192.168.101.132

asa-tst-01 ansible_host=192.168.101.135

[csr-routers]

csr-1000v-01

csr-1000v-02

[switches]

sw-tst-01

sw-tst-02

[asa-fws]

asa-tst-01

[routersnswitches]

csr-routers

switches

Based on the above –

- The first part is the name/identification of the remote device; “ansible_host” is the variable name with a value of an IP address to send connection requests to the remote device (this could be be FQDN or hostname instead of IP address).

- Secondly, we have created three groups – csr-routers/switches/asa-fws.

- Lastly, we put all the three groups onto a new group/big group “routernswitches”.

By default, there is another group there called “all” – this includes ALL the devices in the inventory list; you don’t need to define “all” separately.

Cool – now we have got our inventory file ready!

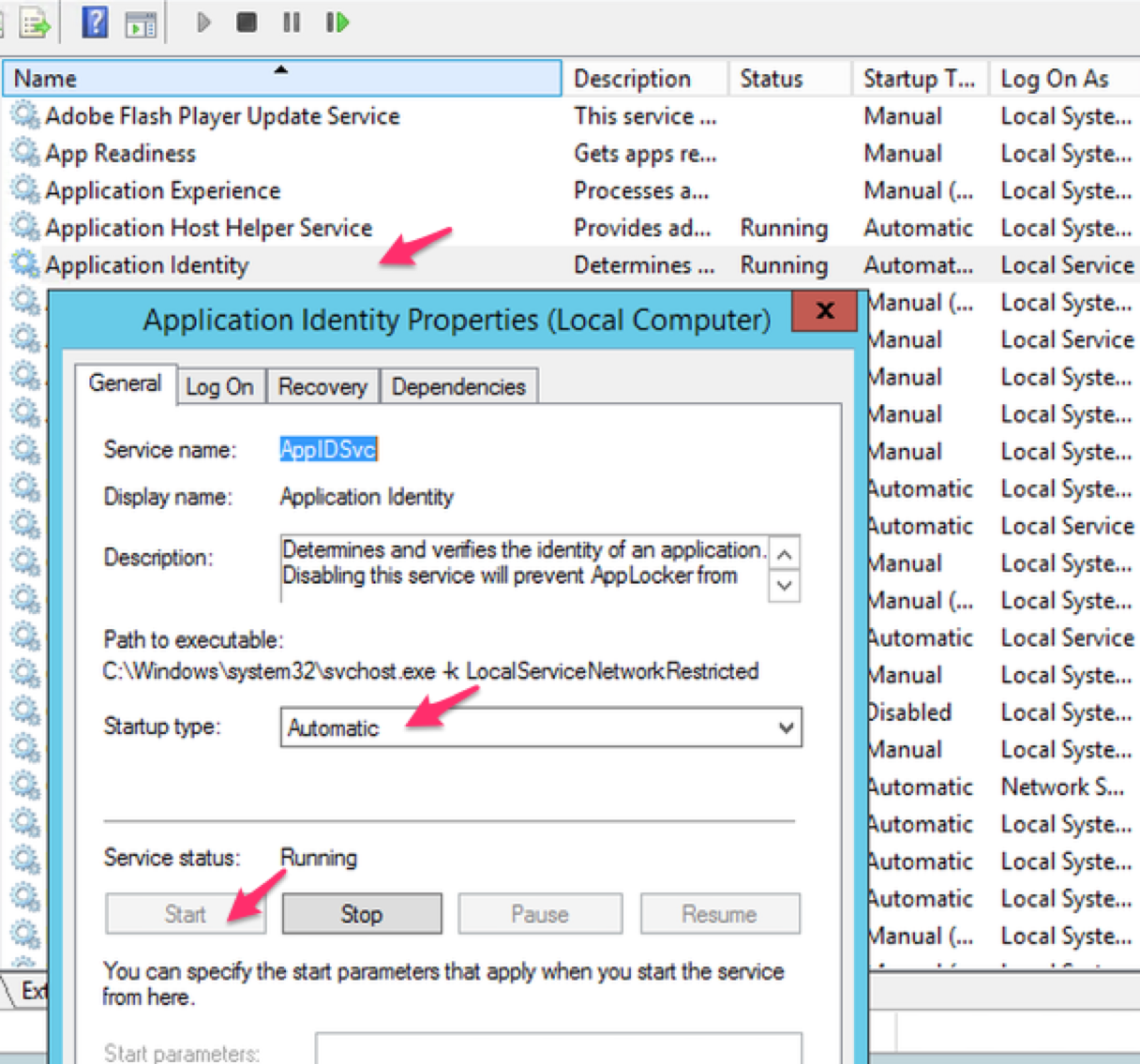

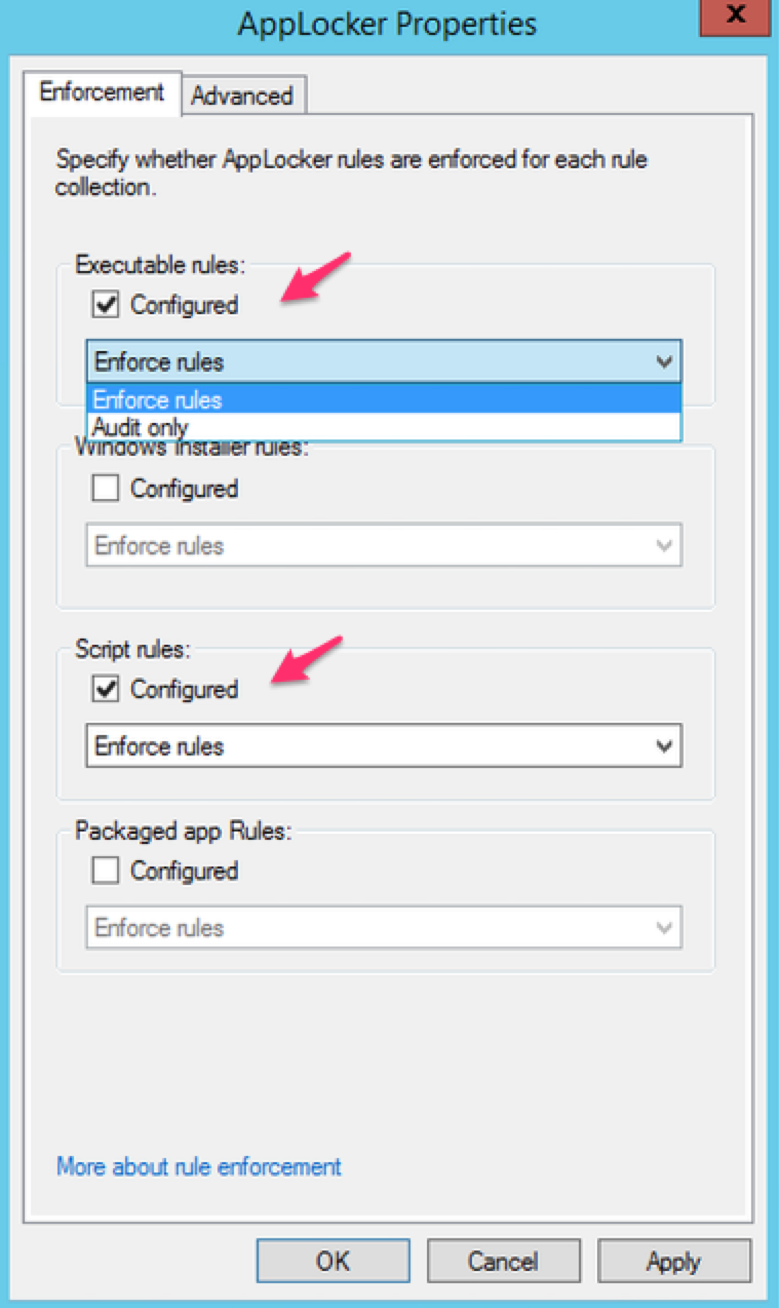

Important Note: Ansible is agentless – then how it is going to talk to the remote systems? The answer is – for Linux/Cisco IOS/NXOS/JUNOS and similar Ansible use SSH connection. For Windows based targets Ansbile use PowerShell Remote Admin windows feature!

Step2: Ansible YAML playbook file/files

Once the inventory file is ready – next step is to create Ansible playbook. Question is what is a playbook, what is it’s role? Well – a playbook contains list of actions/tasks to be performed onto the remote devices; each of the action is called a “play”. While creating a playbook you need to define the workflow of tasks in “correct order” to be automated then convert them to different “plays” within an Ansible playbook file. Playbooks are written on YAML syntax.

As a network engineer you are already familiar with what steps it takes to setup two routers with OSPF; let’s break it down into multiple tasks (aka plays) –

- Task 1: setup common parameters such as hostname/dns server/etc (Play 1)

- Task 2: setup network interfaces with IP address on both the router (Play 2)

- Task 3: setup ospf parameters on both the routers (Play 3)

- Task 4: return/display “show run” on both (Play 4)

- Task 5: return/display “show ip interface brief” (Play 5)

[Note: make sure to setup SSH and a network interface on both the routers so that Ansible controller can connect to both the above routers]

We can put together all the above plays (play 1 to 5) onto Ansible YAML playbook file and push to both the routers at the same time!

Before proceeding to YAML syntaxes – you “should” learn the following YAML items:

- YAML “directory” items

- YAML “list” items

Let’s create our first YAML script now!

Task A: Display “show run” and “show ip interface brief”

We want to display the above show from the “csr-1000v-01” and “csr-1000v-02” listed on our inventory file we have created earlier.

-

name: Test playbook to return “show” command from Cisco CSR 1000v

hosts: csr-routers

connection: local

vars:

cli:

username: cisco

password: cisco

tasks:

- name: Print running config (play1)

ios_command:

provider: "{{ cli }}"

commands:

- show run

register: show_run

- debug:

var: show_run

- name: Print ip interfaces (play2)

ios_command:

provider: "{{ cli }}"

commands:

- show ip interface brief

register: show_int

- debug:

var: show_int

Let’s do a debrief of the above YAML syntax:

| YAML item name |

What is does? |

| name: Test platbook to return… |

this is just a name to identify the YAML playbook file |

| hosts: csr-routers |

this is the host group we configured in the inventory file, this can be a single device or “all” for all the devices within the inventory file list as well |

| connection: local |

this tells where to execute this YAML playbook file, in this example we are executing this on the local Ansible controller running Linux |

| vars: cli: …. |

we define this variable for username and password to connect to the remote CSR routers, otherwise we need to define this every time we want to run a play |

| tasks: |

list of plays starts from here |

| ios_command: |

this is pre-defined Ansible module created using Python; Cisco IOS show command details are listed here in this module |

| provider: |

this tells Ansible to use the pre-defined variables (cli with username/password) while sending remote connection requests to remote device |

| command: |

the actual Cisco IOS command |

| register: |

this tells Ansible to capture the command output within Ansible in a variable name defined in the register section |

| debug: var: |

this tells Ansible to display the previously captured register value what is stored in the register variable |

Let’s create the Linux files and execute the above playbook with the inventory list –

$sudo mkdir /opt/ansible-cisco-practice

$cd /opt/ansible-cisco-practice

$sudo vi my-first-playbook-cisco.yaml ;copy and paste the above YAML contents

$sudo vi inventory.txt ;copy and paste the inventory file contents here

$sudo ansible-playbook my-first-playbook-cisco.yaml -i inventory.txt

Based on the above the “ansible-playbook” is the command name to execute a YAML playbook file; the option “i” specify the location of the inventory list file.

You might end-up seeing Ansible error message showing “unable to connect” to remote devices using SSH due to “SSH host fingerprint” issue – by default SSH validates host fingerprint for security. Two options to get this fixed as following:

- manually send a connection request to the Cisco IOS device from the Ansible controller Linux via SSH – this will add the IOS device SSH fingerprint onto the Linux on the very first connection request.

- Or update the Ansible system parameter configuration file to tell Ansible “not to validate” SSH host fingerprint; edit the “/etc/ansible/ansible.cfg” file and uncomment the following parameter and the re-run the “ansible-playbook” command with inventory file:

$sudo vi /etc/ansible/ansible.cfg

#host_key_checking = False ;remove the hash # from this line

host_key_checking = False

You should be able to see Ansible is connecting to both the Cisco CSR routers mentioned and executed Play1 and Play2 with the “show” commands outputs!

You are nearly there! Now you know how to setup Ansible and get commands executed on remote Cisco IOS devices! Congratulations!

Task B: Configure Cisco an Interface and few OSPF parameters

You want to send the following configurations to both the CSR routers:

On “csr-1000v-01”:

!

interface G2

description “connected to Router XX”

ip address 172.16.100.51 255.255.255.0

no shut

!

router ospf 100

passive-interface G1

network 172.16.100.0 0.0.0.255 area 0

!

On “csr-1000v-02”:

!

interface G2

description “connected to Router XX”

ip address 172.16.100.52 255.255.255.0

no shut

!

router ospf 100

passive-interface G1

network 172.16.100.0 0.0.0.255 area 0

!

On both “csr-1000v-01” and “csr-1000v-02”:

!

ip name-server 8.8.8.8

ip name-server 8.8.4.4

ip domain-name test.local

!

Let’s create the Ansible YAML playbook file with the above:

-

name: Test playbook – IOS Configs for Cisco CSR 1000v

hosts: csr-routers

connection: local

vars:

cli:

username: cisco

password: cisco

tasks:

- name: Configure TopLevel IOS configs DNS/DomainName on Both (Play1)

ios_config:

provider: "{{ cli }}"

lines:

- ip name-server 8.8.8.8

- ip name-server 8.8.4.4

- ip domain-name test.local

- name: Configure GE2 interface on csr-1000v-01 only (Play2)

when: ansible_host == "192.168.101.125"

ios_config:

provider: "{{ cli }}"

lines:

- description "Connected to Router XX"

- ip address 172.16.100.51 255.255.255.0

- no shutdown

parents: interface G2

- name: Configure OSPF 100 on csr-1000v-01 only (Play3)

when: ansible_host == "192.168.101.125"

ios_config:

provider: "{{ cli }}"

lines:

- network 172.16.100.0 0.0.0.255 area 0

- passive-interface G1

parents: router ospf 100

- name: Configure GE2 interface on csr-1000v-02 only (Play4)

when: ansible_host == "192.168.101.126"

ios_config:

provider: "{{ cli }}"

lines:

- description "Connected to Router XX"

- ip address 172.16.100.52 255.255.255.0

- no shutdown

parents: interface G2

- name: Configure OSPF 100 on csr-1000v-02 only (Play5)

when: ansible_host == "192.168.101.126"

ios_config:

provider: "{{ cli }}"

lines:

- network 172.16.100.0 0.0.0.255 area 0

- passive-interface G1

parents: router ospf 100

- name: Save configs on both CSRs (Play6)

ios_config:

provider: "{{ cli }}"

lines:

- do write

- name: Lastly display “show run” on both CSRs (Play7)

ios_command:

provider: "{{ cli }}"

commands:

- show run

register: show_run

- debug:

var: show_run

Let’s demystify the above YAML script –

| Play Name |

Ansible Module Used |

Target CSR Router |

| Play1: TopLevel IOS configs DNS/DomainName |

ios_config |

Both CSR routers |

| Play2: Configure GE2 interface with IP |

ios_config |

csr-1000v-01 only |

| Play3: OSPF 100 Configuration |

ios_config |

csr-1000v-01 only |

| Play4: Configure GE2 interface with IP |

ios_config |

csr-1000v-02 only |

| Play5: OSPF 100 Configuration |

ios_config |

csr-1000v-02 only |

| Play6: Save configs |

ios_config |

Both CSR routers |

| Play7: display “show run” |

ios_command |

Both CSR routers |

We have used “condition when” here to push configurations to specific routers.

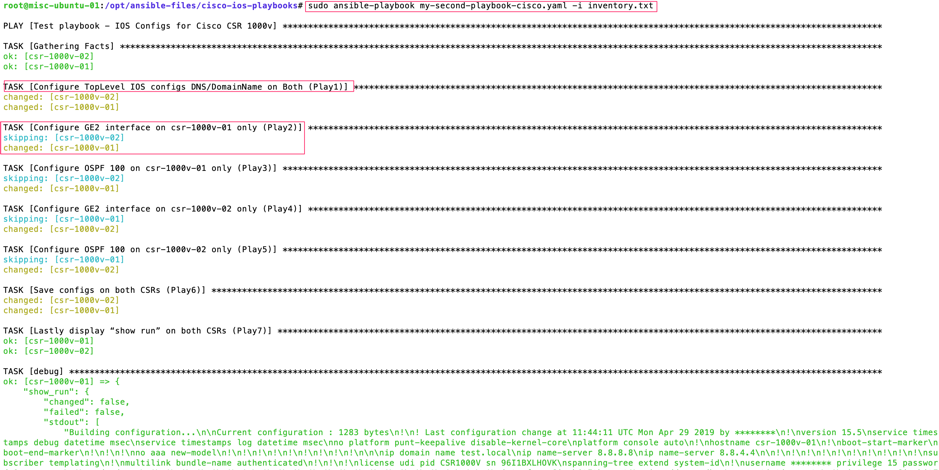

Let’s say – we save the above YAML syntaxes on a file called “my-second-playbook-cisco.yaml”; we can now execute this playbook –

$cd /opt/ansible-cisco-practice

$sudo vi my-second-playbook-cisco.yaml ;copy and paste the above YAML contents

$sudo ansible-playbook my-second-playbook-cisco.yaml -i inventory.txt

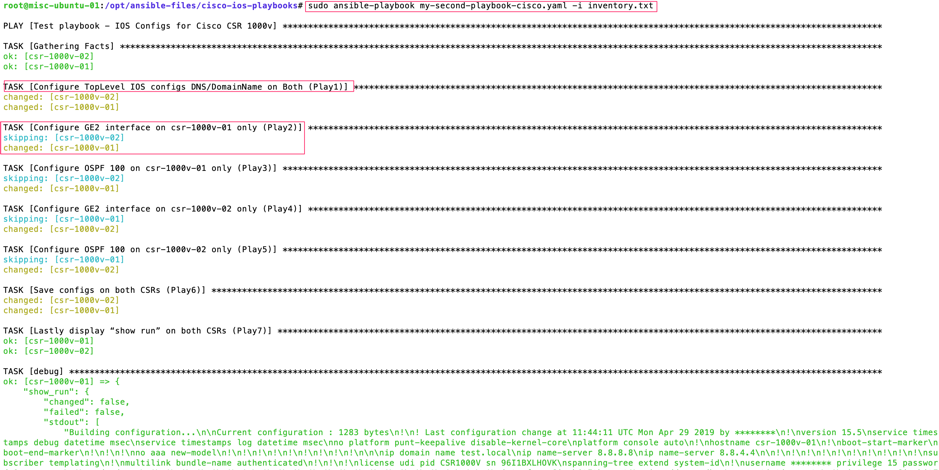

You should see the playbook execution results and “show run”. Screenshot following:

See the “changed” and “skipping” in the output above; this is because we used condition “when”.

Task C: Let’s take a backup of IOS device configs from “show run”

This is very simple; most of YAML syntax are based on the first YAML script “my-first-playbook-cisco.yaml” for this backup job. In this example – we will tell Ansible to save the “register: show_int” output onto a TXT file for both the CSR devices.

-

name: Playbook to backup configs of Cisco CSRs

hosts: csr-routers

connection: local

vars:

cli:

username: cisco

password: cisco

tasks:

- name: Print running config – csr-1000v-01 (play1)

when: ansible_host == "192.168.101.125"

ios_command:

provider: "{{ cli }}"

commands:

- show run

register: show_run_csr-1000v-01

- name: save output to /opt/ios-backups - csr-1000v-01 (play2)

when: ansible_host == "192.168.101.125"

copy:

content: "{{ show_run_csr-1000v-01.stdout[0] }}"

dest: "/opt/ios-backups/backup_{{ ansible_host }}.txt"

- name: Print running config – csr-1000v-02 (play3)

when: ansible_host == "192.168.101.126"

ios_command:

provider: "{{ cli }}"

commands:

- show run

register: show_run_csr-1000v-02

- name: save output to /opt/ios-backups - csr-1000v-02 (play4)

when: ansible_host == "192.168.101.126"

copy:

content: "{{ show_run_csr-1000v-02.stdout[0] }}"

dest: "/opt/ios-backups/backup_{{ ansible_host }}.txt"

Now execute the above playbook; our YAML name file for Cisco IOS back is “cisco-ios-backup-playbook.yaml” –

$sudo mkdir /opt/ios-backups

$cd /opt/ansible-cisco-practice

$sudo vi cisco-ios-backup-playbook.yaml ;copy and paste the above YAML contents

$sudo ansible-playbook cisco-ios-backup-playbook.yaml -i inventory.txt

$cd /opt/ios-backups

$ls -la

You should be able to see two backup files in the directory “/opt/ios-backups”; run the “cat” command to see the contents of these files. Your backups are done!

What is NEXT?

Once you are familiar with Linux and Ansible YAML – you next step on NetOps should be following:

- Learn GIT and GitHub. GIT is source code version control system for your YAML scripts. I will write a separate post on this later.

- Learn Ansible Tower or similar such as Foreman or Ansible Semaphore UI; this will give you huge control over your centralised Ansible orchestration, visibility, control, reporting and many more.

- And obviously learn Ansible advanced details (keep exploring different Ansible modules on official Ansible web site).